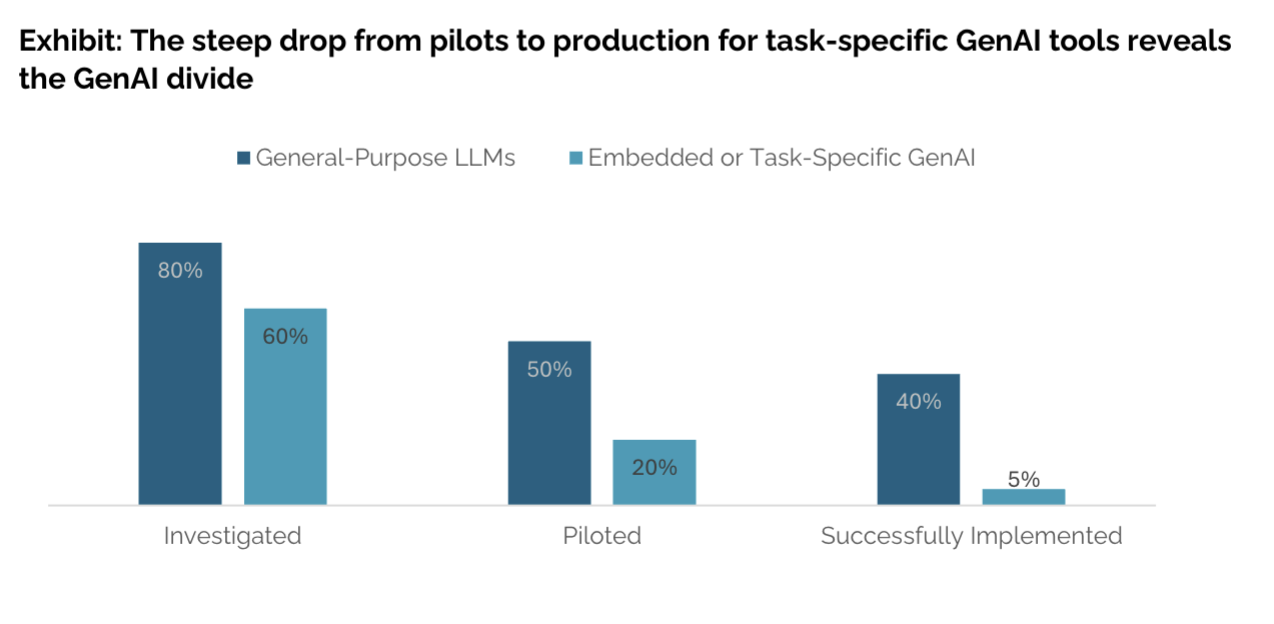

Generative AI is no longer a future bet; it’s already embedded in daily work. Over 80% of organizations have piloted tools like ChatGPT or Copilot, and nearly 40% report official deployment (MIT Project NANDA, 2025).

But adoption isn’t the same as transformation. Despite $30–40 billion invested in GenAI initiatives, 95% of organizations are seeing no measurable ROI from their pilots. MIT researchers call this gap the GenAI Divide: the difference between widespread experimentation and meaningful business outcomes.

Boards and CEOs are pushing aggressive AI strategies. In fact, 99% of executives expect further GenAI investment in the next two years (NTT Data, 2025). Yet policies lag: 72% of organizations still lack a formal GenAI usage policy, and 45% of CISOs remain cautious or negative about adoption.

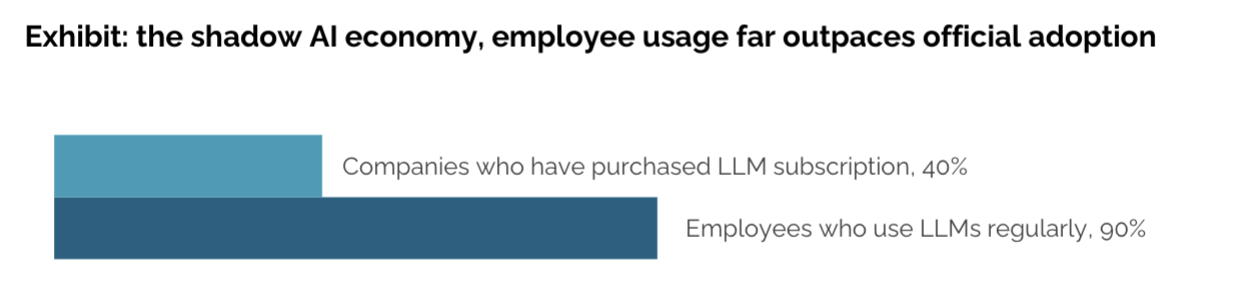

Meanwhile, employees aren’t waiting. The same MIT research shows that while only 40% of companies have purchased official AI subscriptions, employees at 90% of organizations already use personal AI tools for work. This “shadow AI economy” demonstrates that banning tools doesn’t prevent usage; it just makes it invisible.

Some organizations default to bans. But blocking GenAI tools doesn’t work—employees find workarounds, and the business loses out on potential productivity gains.

The smarter starting point is observability: see what’s really happening before deciding how to intervene.

Visibility is foundational.

Organizations that start with visibility understand who is using AI, how it’s being used, and where risks or opportunities appear. This shifts governance from being a constraint to being a conversation.

Policy follows behavior.

Real-time analytics help refine governance by department, role, or use case—grounded in actual usage, not assumptions.

Rollout ≠ adoption.

Licensing a tool doesn’t guarantee it’s used effectively. Without prompt-level insight, investment decisions are made in the dark.

AI governance is how organizations oversee the responsible and effective use of AI. It includes policies and controls, but also enablement and training. Governance is not just compliance—it’s how AI becomes trustworthy and useful.

And governance doesn’t start with control. It starts with understanding actual usage:

Governing GenAI requires observability at the point of interaction: the prompts, the data, and the outcomes.

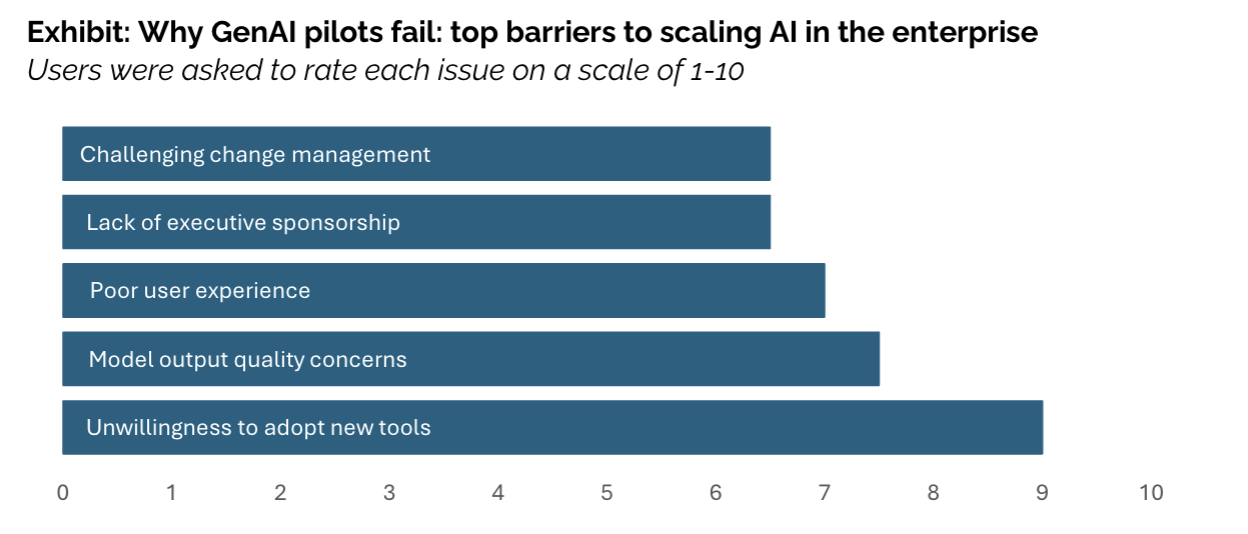

MIT research found the biggest barrier isn’t infrastructure or regulation—it’s the learning gap. Most enterprise tools don’t adapt, remember, or integrate well into workflows.

That’s why employees often prefer consumer tools like ChatGPT: they’re flexible and familiar, even if not enterprise-ready. The organizations that succeed demand tools that can adapt to their processes and improve over time.

The most effective AI strategies don’t start with control—they start with visibility, then evolve:

Governance isn’t a toggle—it’s a process of informed enablement that grows with your teams.

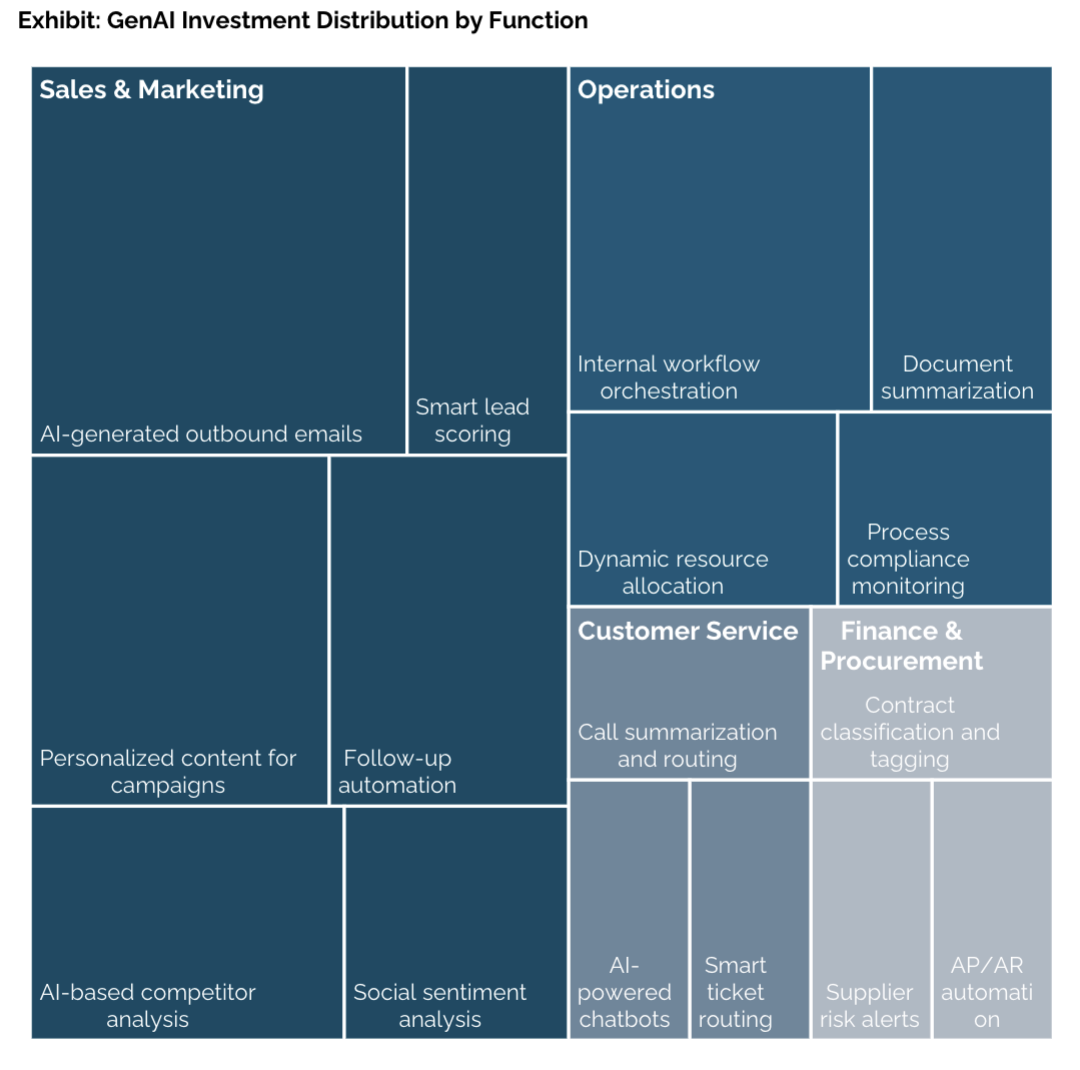

Executives often funnel AI budgets into sales and marketing because outcomes are easy to measure. In fact, nearly 70% of AI budgets go to these functions. But the biggest ROI often comes from overlooked back-office automation: eliminating BPO contracts, cutting agency spend, streamlining compliance workflows.

Form an AI governance committee that includes IT, security, legal, and business leaders. But don’t start with theory—start with data:

MagicMirror helps you baseline this usage immediately, so your decisions are grounded in fact rather than assumption.

MagicMirror provides:

We’ll help you see how AI is really being used, surface exposures, and align governance with how AI actually fits into your workflows.

With the right visibility, safeguards, and enablement in place, you can scale AI confidently—faster than blocking, safer than guessing.